Imagine that your household robot is carrying a cup of coffee from the kitchen counter to the table, where you are sitting. The robot knows what its task is (move the cup of coffee), but it does not know how you want it to perform that task. For instance, you might want the robot to carry the cup away from your body, since the coffee is hot and you are worried that it could spill. Alternatively, you might want the robot to carry the cup close to the ground, so that the cup will not break if it is dropped. Humans have preferences, and different humans have different preferences! As robots transition from structured factories to ever-changing homes, they will need to learn these user preferences, which vary from person to person.

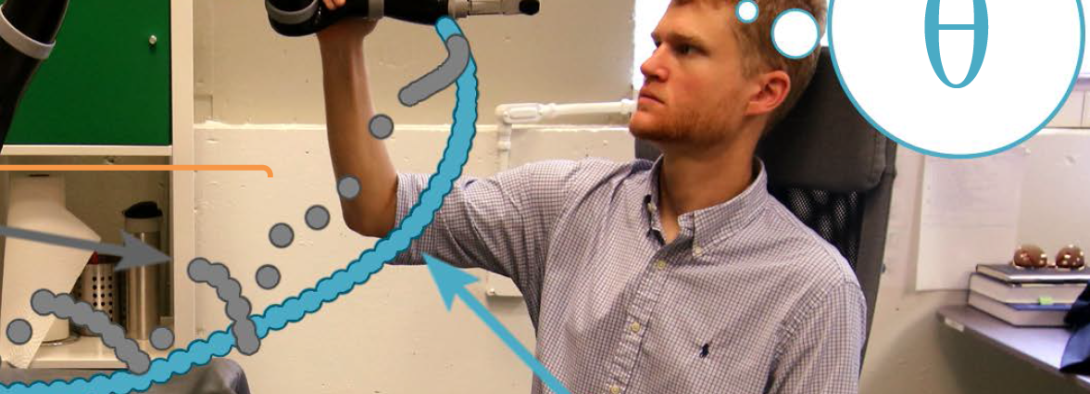

In our research, we explore how physical human interactions can be used to teach a robot to perform its task correctly. When a human notices that the robot is performing its task incorrectly, they can physically intervene to correct the robot. For instance, if the robot is carrying the cup too close to the human, the person might push the robot's end-effector away from their body; if the robot is carrying the coffee too high in the air, the human might pull the robot's end-effector closer to the ground. These actions are not random or meaningless. Instead, the human is intentionally interacting with the robot and attempting to show it how it should behave. Thus, the robot should not ignore the human's corrections, or simply forget about them when the human lets go. Instead, the robot should learn from physical human interactions and change its future behavior!

We began this work by deforming the robot's desired trajectory in response to physical human interactions. The robot has a plan, or desired trajectory, which describes how the robot thinks it should perform the task. We introduced a real-time algorithm that, whenever the human interacts, modifies (or deforms) the robot's future desired trajectory. As a result, each time the human interacts with the robot, they not only change the robot's current state but also affect how the robot will perform the rest of the task. This method is intuitive and easy to implement; however, the robot does not explicitly learn the human's preferences. Accordingly, we next collaborated with Prof. Anca D. Dragan at the University of California, Berkeley, to study how robots can learn human preferences during physical human-robot interaction. We showed that human interactions are actually observations, which provide information about how the human wants the robot to perform its task. By replanning its desired trajectory after each new observation, the robot was able to generate behavior that matches the human's preferences!
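To make the two ideas above more concrete, here is a minimal Python/NumPy sketch. It is an illustration under stated assumptions, not the implementation from our papers. It assumes (1) the desired trajectory is an array of waypoints, (2) a human force deforms a window of future waypoints along a smooth, low-jerk shape, (3) the human's preference is modeled as a weighted sum of two hypothetical features (mean height of the cup and mean distance to the human), and (4) "replanning" simply picks the lowest-cost trajectory from a few candidates. All function names, features, and step sizes (`mu`, `alpha`) are hypothetical.

```python
import numpy as np


def jerk_matrix(n: int) -> np.ndarray:
    """Third-order finite-difference matrix A with shape (n + 3, n).

    Row i applies the stencil [1, -3, 3, -1] to waypoints i..i-3, treating
    points outside the window as zero, so ||A v||^2 approximates the jerk of
    a deformation v that must fade out at both ends of the window.
    """
    a = np.zeros((n + 3, n))
    stencil = (1.0, -3.0, 3.0, -1.0)
    for i in range(n + 3):
        for k, c in enumerate(stencil):
            j = i - k
            if 0 <= j < n:
                a[i, j] = c
    return a


def deformation_shape(n: int) -> np.ndarray:
    """Low-jerk shape H for a force applied at the first waypoint of the window.

    Solves (A^T A) h = e_0 and rescales h to have norm sqrt(n).
    """
    a = jerk_matrix(n)
    u = np.zeros(n)
    u[0] = 1.0  # assumption: the human pushes at the window's first waypoint
    h = np.linalg.solve(a.T @ a, u)
    return np.sqrt(n) * h / np.linalg.norm(h)


def deform(traj, start, n, force, mu=0.05):
    """Return a copy of traj (shape (N, d)) with traj[start:start + n] shifted by mu * H * force."""
    out = np.array(traj, dtype=float, copy=True)
    end = min(start + n, len(out))  # requires start < len(traj)
    h = deformation_shape(end - start)
    out[start:end] += mu * np.outer(h, force)
    return out


def features(traj, human_pos):
    """Hypothetical features: mean height (z) and mean distance to the human."""
    height = traj[:, 2].mean()
    distance = np.linalg.norm(traj - human_pos, axis=1).mean()
    return np.array([height, distance])


def update_weights(theta, traj_robot, traj_human, human_pos, alpha=1.0):
    """Shift weights so the corrected trajectory gets lower cost theta . phi than the original."""
    delta = features(traj_human, human_pos) - features(traj_robot, human_pos)
    return theta - alpha * delta


def replan(candidates, theta, human_pos):
    """Stand-in for a real planner: choose the candidate with the lowest cost."""
    costs = [theta @ features(c, human_pos) for c in candidates]
    return candidates[int(np.argmin(costs))]


if __name__ == "__main__":
    # Straight-line plan from the counter to the table (units assumed to be meters).
    plan = np.linspace([0.0, 0.0, 0.5], [1.0, 0.0, 0.5], 20)
    human = np.array([0.5, 0.3, 0.5])
    # The human pushes the end-effector away from their body (-y) at waypoint 5.
    corrected = deform(plan, start=5, n=10, force=[0.0, -1.0, 0.0])
    theta = update_weights(np.zeros(2), plan, corrected, human)
    print("weights:", theta)  # the distance weight becomes negative: farther is cheaper
    near, far = plan, plan + [0.0, -0.2, 0.0]
    print("replanned trajectory is the far one:", replan([near, far], theta, human) is far)
```

In this sketch the deformation alone only changes the current plan, while `update_weights` turns the same correction into an observation about the human's preference, which then carries over to every later call to `replan`. That mirrors the distinction drawn in the paragraph above, with a simple feature-difference update standing in for the learning step.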

To get a better idea of our work, check out this video <https://www.youtube.com/watch?v=I2YHT3giwcY> and the papers included on the right!